April 15, 2026

Updated: April 15, 2026

A threat-intelligence-led, attribution-disciplined breakdown of DPRK-linked theft chains and laundering workflows using the latest available public reporting.

Mohammed Khalil

This analysis explains how North Korean hackers stole billions in crypto through a repeatable mix of social engineering, privileged-access abuse, signing-workflow compromise, and disciplined post-theft laundering. Latest available public figures show over $3.4B stolen from crypto platforms during 2025 (January through early December), with a single February exchange compromise accounting for roughly $1.5B.

Within that broader theft picture, multiple independent analytics providers assessed a historically high DPRK-linked share. For example, one major dataset reports $2.02B stolen by North Korean hackers in 2025, following $1.34B in 2024; other official and private-sector reporting uses different attribution thresholds and arrives at different (often lower-bound) totals. The directional signal is consistent: DPRK-linked operations disproportionately drive the extreme end of annual loss distributions.

The operational significance goes beyond headline totals. For exchanges, custodians, and VASPs, the risk is concentrated in (a) operator identity and access paths, (b) key-generation, storage, and signing workflows, and (c) the ability to detect and interrupt laundering within hours before off-chain conversion makes recovery impractical. The same patterns also create sanctions and compliance exposure when stolen funds touch high-risk rails, and they raise incident response complexity because “theft” and “laundering” are distinct phases with different containment levers.

North Korean crypto theft refers to cryptocurrency theft activity publicly attributed or linked by credible intelligence, law-enforcement, or blockchain-analysis sources to DPRK-linked actors, typically involving exchange or wallet compromise, operational deception, and structured laundering workflows designed to move and convert stolen digital assets at scale.

When analysts say “North Korea stole billions,” they are usually combining three different measurement types that should not be conflated:

Latest available platform analytics show a sharp step change in 2025, with total stolen value rising materially because one or two catastrophic events dominated the annual total. Outlier events distort year-over-year comparisons: you can have fewer incidents overall, but higher aggregate losses if the largest compromise is extreme. That is exactly the kind of concentration risk that state-linked operators exploit because it yields strategic-scale returns from a small number of successful operations.

| Metric | 2024 | 2025 or Latest Available | Trend | Notes |
|---|---|---|---|---|
| Total stolen from crypto platforms (all actors) | $2.2B | >$3.4B | Up | 2025 figure reflects Jan–early Dec reporting window |
| DPRK-linked stolen (consistent dataset) | $1.34B | $2.02B | Up | Attribution varies by provider; figures represent assessed lower bounds |
| Largest single publicly reported theft | ~$305M | ~$1.5B | Up | Outliers dominate annual totals |
| Value stolen from individual victims | Peak ~$1.5B | $713M | Down | Incident counts can rise even when average take per victim drops |
| Observed laundering tempo after major DPRK-attributed hacks | Multi-week | ~45 days | Stable | Typical pattern when funds are actively laundered on-chain, not via immediate off-chain sale |

Interpreting these numbers requires discipline. “Total stolen” is usually measured at the time of theft (USD equivalents), not after price changes. “DPRK-linked” is an attribution claim; it can strengthen over time (more evidence) or weaken (re-attribution), and different firms apply different thresholds and address clustering approaches. Official government statements sometimes cite specific incidents and amounts, while sanctions-monitoring reports may publish conservative “at least” sums with explicit caveats that totals may be higher.

From a threat-economics perspective, crypto offers a state-linked revenue generator with three properties that are difficult to replicate through conventional cybercrime: portable value at global scale, fast cross-border movement, and partial irreversibility once assets move through non-cooperative or opaque off-ramps. Those characteristics are repeatedly referenced in government statements that link DPRK cyber theft and laundering to revenue generation under sanctions pressure.

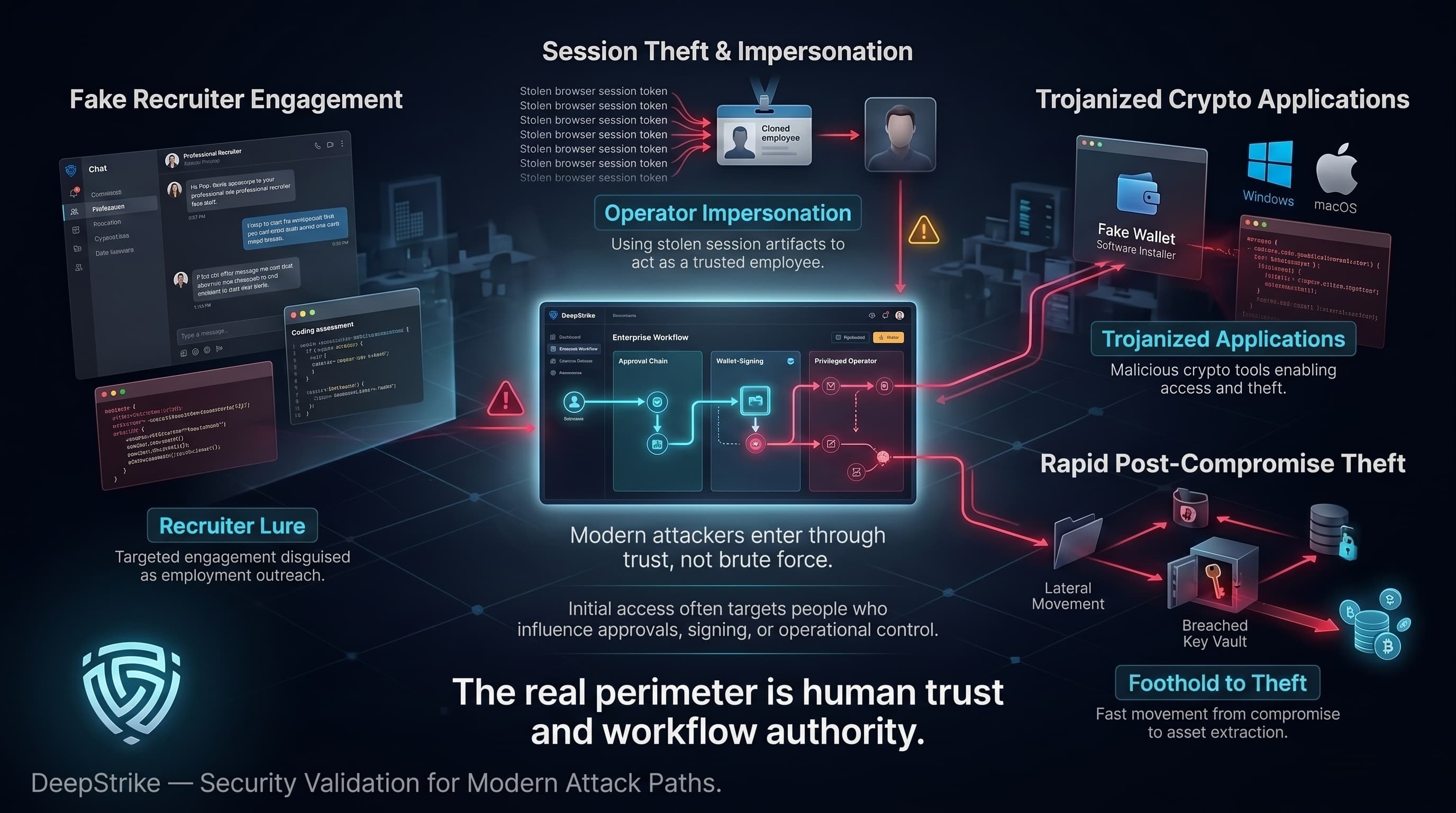

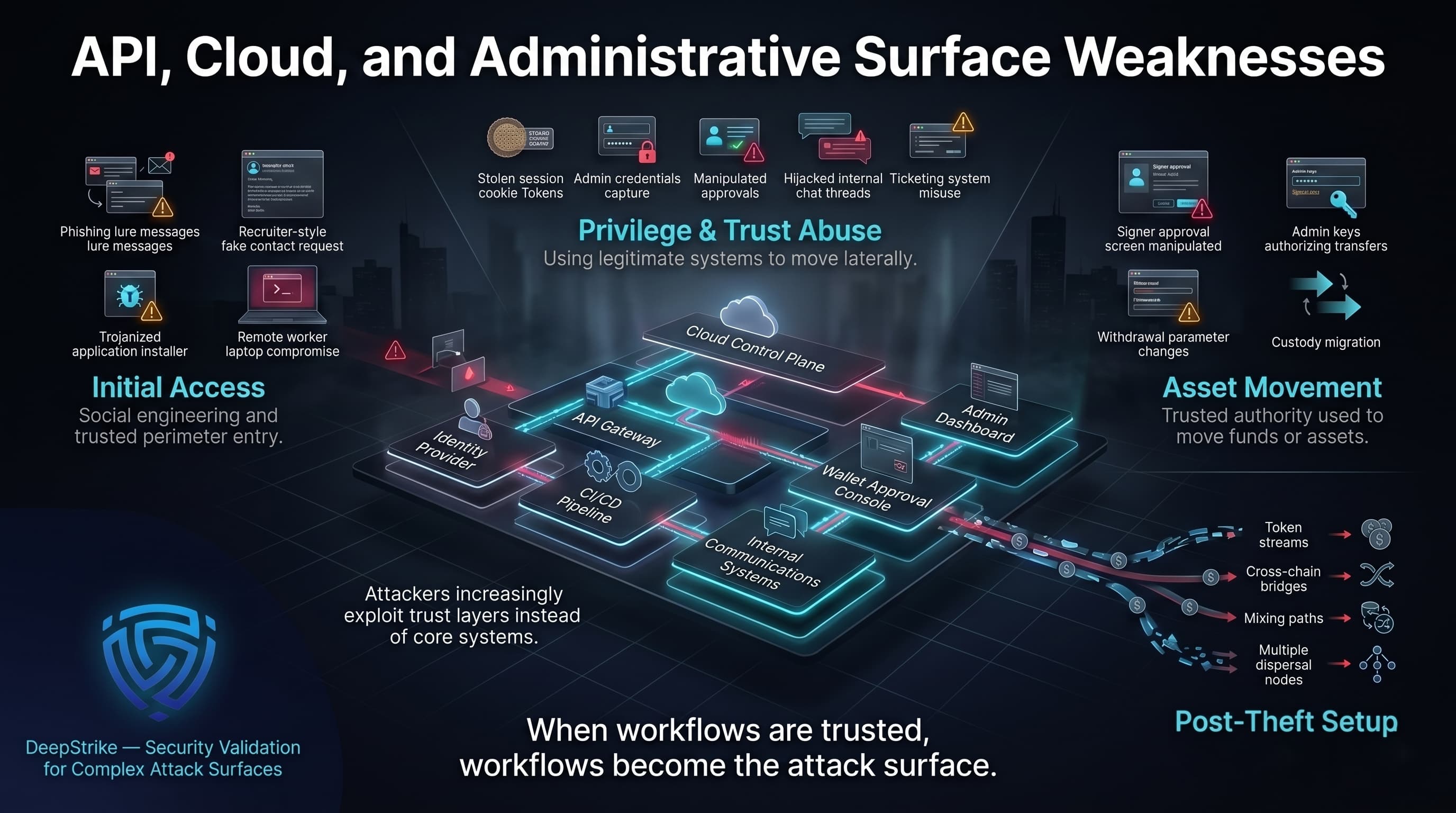

Centralized exchanges, custodians, and large treasuries are attractive because they concentrate liquidity behind a small set of operational workflows: signer devices, approval interfaces, administrative keys, and withdrawal governance. A successful compromise does not need to “break cryptography” or exploit blockchain consensus; it needs to compromise the humans and systems that decide what gets signed. That is why major public reporting on recent large thefts emphasizes social engineering, workflow manipulation, and privileged access rather than purely on-chain technical flaws.

Crypto also creates a second-order incentive: laundering infrastructure can be industrialized. Enforcement actions and financial intelligence findings increasingly focus on facilitators (mixers, laundering marketplaces, high-risk exchanges) because they function as recurring conversion rails for multiple crime types, including DPRK-linked theft.

The most consistent initial-access pattern across publicly described DPRK-linked crypto theft cases is social engineering targeting people who can influence signing, approvals, or operational trust.

A high-confidence, government-documented example is the 2024 theft from DMM Bitcoin-related infrastructure: an actor posing as a recruiter contacted a wallet-software employee and delivered a malicious script framed as a pre-employment test, later using stolen session artifacts to impersonate the employee and influence a legitimate transaction request workflow. This is not “spray-and-pray phishing”; it is targeted engagement designed to land inside an access path that the victim organization treats as trusted.

A complementary government advisory describes a broader pattern where targets are induced to download trojanized cryptocurrency applications for Windows or macOS; the malware then enables access, lateral movement, and theft of private keys or other sensitive assets. The same advisory emphasizes that post-compromise activity is tailored to the victim environment and can complete quickly after initial access, underscoring operational readiness to move from foothold to theft.

A second access vector is “insider-like” access achieved through fraudulent remote employment and contractor positioning. This is not hypothetical: U.S. law enforcement actions describe DPRK-linked schemes where overseas operators use stolen or synthetic identities to obtain remote IT jobs, route access through U.S.-based “laptop farms,” and generate revenue while gaining access to sensitive systems (including virtual currency environments).

In one adjudicated case, Christina Marie Chapman was sentenced for enabling a scheme that placed DPRK-linked remote workers into IT positions across hundreds of companies and generated more than $17M in illicit revenue, illustrating the operational feasibility of building long-lived footholds inside legitimate corporate environments.

For exchanges and Web3 infrastructure firms, the security implication is direct: remote-worker fraud is both a revenue stream and a pipeline for privileged access mapping. Law enforcement reporting explicitly notes that, once employed, operators can access sensitive information and, in some cases, steal virtual currency, framing this as a combined fraud-and-intrusion risk rather than a pure HR problem.

High-value theft at exchange scale typically requires bypassing custody controls. Modern DPRK-linked operations repeatedly demonstrate that the “hard part” is not on-chain transfer mechanics — it is getting cryptographically valid authorization to move assets.

The 2025 Bybit compromise is the clearest public example of a signing-workflow failure mode: investigators and multiple public disclosures describe an attack where the interface used to prepare and review a multisig transaction was manipulated, resulting in signers approving a transaction different from what they believed they were signing. The outcome was a loss of approximately $1.5B in virtual assets, with federal law enforcement directly attributing responsibility to North Korea and tying the activity to “TraderTraitor.”

This class of incident matters because it breaks a common executive assumption: “We have hardware wallets and multisig; we are safe.” Hardware-backed signing reduces key-exfiltration risk, but it does not inherently prevent intent manipulation when signers rely on a compromised UI or cannot fully interpret complex transaction payloads at signing time. Post-incident analysis from security vendors and hardware-wallet manufacturers highlights UI deception and the need for device-level clarity in what is being authorized.

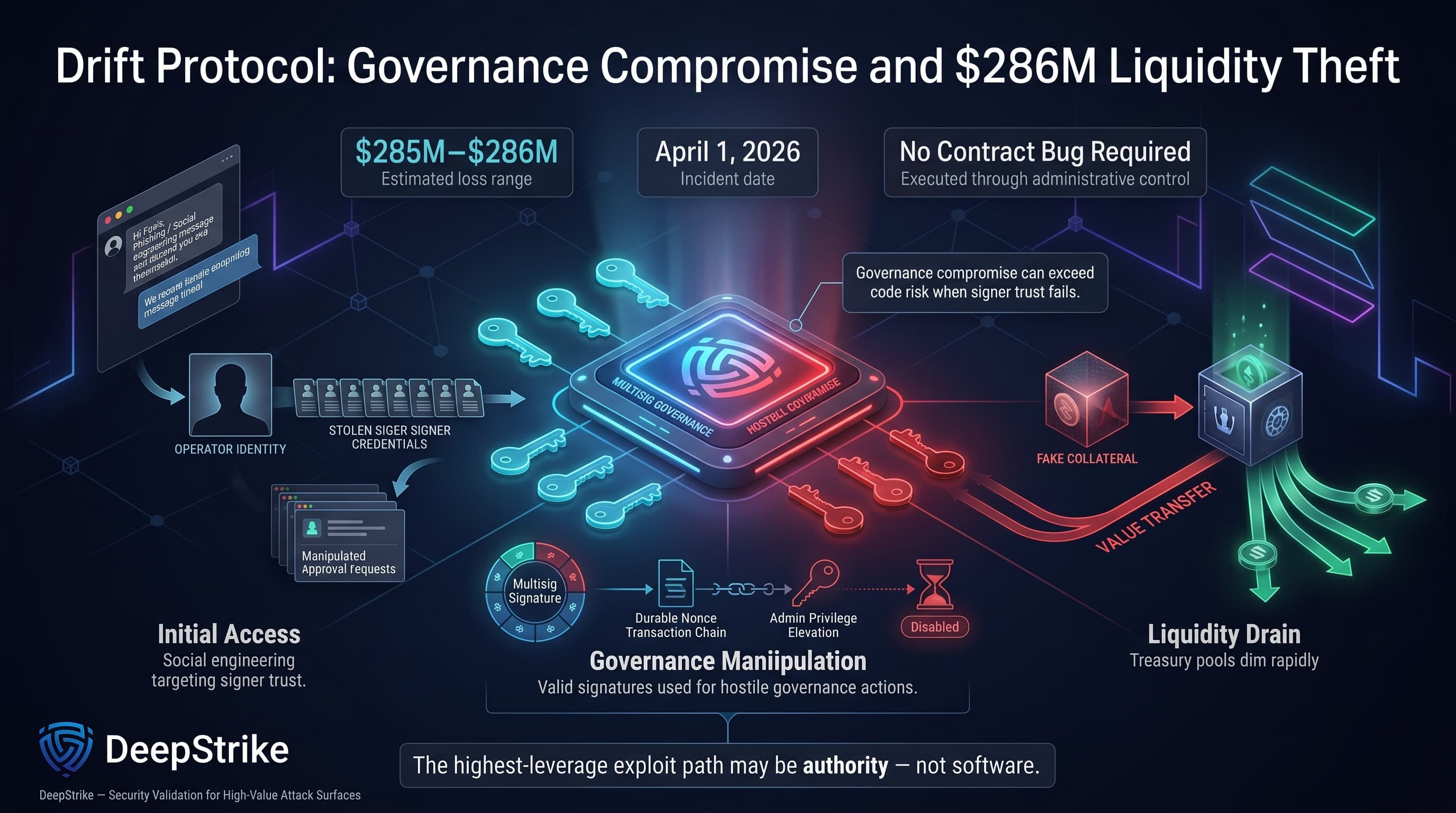

A parallel pattern is visible in DeFi governance compromises where attackers obtain administrative control and then use the protocol “as designed” to extract value (e.g., raising limits, whitelisting collateral, changing parameters). The Drift incident reporting emphasizes multisig governance compromise and durable nonces (pre-signed transactions executed later), demonstrating that “no smart contract bug” does not mean “no exploit.”

The enabling layer for many of these thefts is Web2 infrastructure: identity, cloud credentials, build pipelines, and identity governance.

In the Bybit case, independent investigations describe compromise of a developer workstation followed by abuse of cloud access (tokens/sessions) to alter front-end resources served to signers. This is a supply-chain style compromise: attackers do not need to breach the exchange core directly if they can compromise a trusted dependency that the exchange uses for critical wallet operations.

In the DMM Bitcoin-related case, compromise hinged on session cookie data and access to an unencrypted communications system used to coordinate wallet management actions — a reminder that “operational comms” and “workflow tooling” are part of the attack surface when they are used to approve or coordinate transactions.

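A practical way to model the end-to-end theft chain (without reducing it to one incident) is: targeted initial access (often social engineering) → privilege or trust abuse inside operational tooling → compromised signing or admin authority → rapid dispersal and laundering, sometimes across multiple chains.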

Crypto-theft risk is structurally non-linear. A single compromise of a high-value signing workflow can dominate annual totals, which is why “incident count” is a weak proxy for systemic risk.

Latest available platform analytics highlight that the ratio between the largest hack and the median incident crossed the 1,000x threshold in 2025, and that the top three hacks accounted for 69% of all service losses. This is concentration risk: the distribution has a long tail driven by rare but catastrophic compromises.
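
The two concentration measures mentioned above (largest-to-median ratio and top-three share) are simple to compute over any incident list. The following is a minimal sketch; the function name `concentration_metrics` and the sample losses are hypothetical and chosen only for illustration, not taken from any dataset cited here.

```python
from statistics import median


def concentration_metrics(losses_usd, top_n=3):
    """Return (largest / median ratio, share of total held by the top_n losses)."""
    if not losses_usd:
        raise ValueError("need at least one incident")
    ordered = sorted(losses_usd, reverse=True)
    total = sum(ordered)
    ratio = ordered[0] / median(ordered)
    top_share = sum(ordered[:top_n]) / total
    return ratio, top_share


# Hypothetical incident losses in USD (illustrative only, not real data).
incidents = [1_500_000_000, 90_000_000, 40_000_000, 5_000_000,
             1_000_000, 500_000, 250_000]
ratio, share = concentration_metrics(incidents)
print(f"largest/median: {ratio:,.0f}x, top-{3} share: {share:.1%}")
```

Tracking both numbers alongside incident count makes it harder for a quiet year by count to hide a catastrophic year by value.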

For executive risk committees, the implication is that “average incident loss” thinking is misleading. The relevant control question becomes: What is the probability of one catastrophic signing or custody-control failure, and what is our blast radius if it happens? That reframes the problem from “prevent a hack” to “prevent a catastrophic authorization event, and if it occurs, contain it within minutes.”

The theft is phase one. Phase two is converting highly traceable, high-profile stolen assets into usable value while surviving compliance pressure, address flagging, and stablecoin freezes.

Recent public reporting emphasizes a consistent laundering cadence for DPRK-attributed hacks when funds are actively moved on-chain: an initial burst of obfuscation and routing, followed by staged integration into services that can support cross-chain fragmentation and eventual cash-out. One large dataset describes a typical multi-wave laundering pathway unfolding over roughly 45 days after major DPRK-attributed thefts, while also noting that some funds may sit dormant for extended periods depending on operational objectives and scrutiny.

Federal law enforcement disclosure around the 2025 Bybit theft describes rapid conversion of some stolen assets into bitcoin and other assets dispersed across thousands of addresses on multiple blockchains, with an expectation of further laundering and eventual conversion to fiat currency. This “early dispersion” is not the end state; it is a time-buying mechanism that complicates immediate interdiction.

At the ecosystem level, enforcement and financial intelligence reporting show why on-chain visibility can be insufficient: key parts of the conversion chain can occur through intermediaries and service providers that specialize in settlement, escrow, or off-chain conversion. For example, FinCEN’s actions against a Cambodia-based financial group describe a network used to launder proceeds from DPRK cyber heists alongside broader cyber-scam activity, highlighting how laundering infrastructure can serve multiple illicit markets with weak or absent AML controls.

| Laundering Phase | Approximate Timing | Main Objective | Typical Services / Behaviors | Defensive Relevance |
|---|---|---|---|---|
| Immediate dispersion | Hours to days 0–5 | Break direct provenance; create address volume | Fragmentation into many transfers; cross-chain movement; initial obfuscation | Alerting on post-exploit heuristics; rapid watchlisting and interdiction |
| Transitional integration | Days 6–10 | Move toward liquid rails while avoiding blocks | Bridge usage; swaps into more liquid assets; movement through intermediaries | Cross-chain correlation; bridge risk scoring; stablecoin issuer coordination |
| Long-tail conversion | Days 20–45 | Reach cash-out pathways | Higher-risk service touchpoints; escrow/OTC settlement; indirect exchange exposure | Typology-based detection; monitoring for known laundering hubs and patterns |
| Off-chain settlement | Variable | Convert to fiat or goods | OTC brokers, settlement networks, and non-transparent intermediaries | Intelligence sharing; KYC/EDD rigor; disruption of facilitators |
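
For alert triage, the on-chain windows in the table can be encoded as a small lookup that labels activity by days elapsed since the theft. This is a sketch under stated assumptions: the function name `laundering_phase` is hypothetical, the boundaries are copied from the table, and days the table does not cover (11 to 19, and beyond 45) are deliberately reported as untabulated instead of being guessed. Off-chain settlement has variable timing, so it is not mapped here.

```python
def laundering_phase(days_since_theft: float) -> str:
    """Map elapsed days to the on-chain laundering phase listed in the table."""
    if days_since_theft < 0:
        raise ValueError("days_since_theft must be non-negative")
    if days_since_theft <= 5:
        return "immediate dispersion"
    if 6 <= days_since_theft <= 10:
        return "transitional integration"
    if 20 <= days_since_theft <= 45:
        return "long-tail conversion"
    # The table gives no window for these days; do not infer one.
    return "outside tabulated windows"


for day in (0, 3, 8, 15, 30, 60):
    print(day, "->", laundering_phase(day))
```

A label like this is only a prioritization hint; the source reporting also notes that funds can sit dormant for extended periods.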

A few technical characteristics repeatedly appear in public analytics describing DPRK-linked laundering:

| Attribute | DPRK-Linked Activity | Other Crypto Threat Actors |
|---|---|---|
| Primary Objective | State-linked revenue generation and strategic sustainment | Profit-motivated crime, often opportunistic or market-driven |
| Typical Theft Scale | Skewed toward very large events; high concentration in top incidents | Broader distribution; fewer state-scale outliers |
| Preferred Laundering Behaviors | High fragmentation; structured, multi-wave cycle; strong cross-chain and specialized network preference | More heterogeneous; often larger tranche sizes and more direct use of mainstream liquidity |
| Operational Patience | Willingness to delay laundering, stage access, and build trust over months | Often faster monetization pressure, especially in purely criminal operations |
| Service Preferences | Notable reliance on specialized settlement ecosystems and facilitators | More variable; includes direct exchange usage, DeFi arbitrage, and other patterns |
| Strategic Risk to Victims | Systemic: sanctions exposure, regulatory scrutiny, and catastrophic liquidity loss | Typically financial loss and operational disruption, but less state-level compliance entanglement |

This distinction matters because it changes what “good detection” looks like. If you tune monitoring for large, obvious transfers, you can miss high-frequency fragmentation and cross-chain fan-out. If you focus only on DeFi exploit signatures, you can miss governance and signing compromises that use valid signatures. And if you treat laundering as purely on-chain, you can underweight off-chain settlement risk that enforcement actions repeatedly highlight.

Latest available reporting shows target selection has shifted over time in ways that align with attacker adaptation and defender improvements.

In 2024, one major dataset observed a pivot where centralized services were heavily targeted in Q2 and Q3, and where private key compromises represented the largest share of stolen crypto for the year. That is consistent with a threat model where exchanges are breached not by “breaking DeFi,” but by breaking the custodial workflows that control keys and approvals.

In 2025, reporting emphasizes escalating severity of centralized-service compromises (with infrequent but enormous losses) alongside a surge in personal wallet compromise incident counts. At the same time, DeFi hack losses were described as suppressed relative to rising TVL, suggesting improvements in some DeFi defensive practices and potential target substitution by attackers.

The 2026 Drift incident reinforces an additional shift: governance and operational control-layer attacks can produce DeFi-scale losses without exploiting a code vulnerability. If administrative protections such as timelocks are absent or bypassed, “valid signatures” can authorize catastrophic parameter changes and withdrawals.

| Target Type | Trend Signal | Why It Matters | Notes |
|---|---|---|---|
| Centralized exchanges / custodians | Higher severity, lower frequency | Single failures dominate annual totals | Focus on signing integrity and workflow governance |
| Individual wallets | Higher incident count | Expands victim surface and fraud pipelines | Value per victim can vary year to year |
| DeFi protocols | Control-layer attacks persist | Governance compromise can outperform code exploits | Timelocks and intent monitoring become critical |

What happened: Federal law enforcement reported the theft of approximately $1.5B in virtual assets from Bybit in February 2025 and attributed responsibility to North Korea, labeling the activity “TraderTraitor.”

Attack path (publicly described): Investigations and post-incident analysis describe compromise of a trusted wallet-management interface and transaction intent manipulation, resulting in authorized signers approving a malicious transaction. Independent reporting describes modification of front-end resources served to signers and rapid post-execution cleanup, illustrating supply-chain compromise against an operational dependency rather than a direct on-chain protocol flaw.

Loss/impact: Approximately $1.5B, described as the largest digital asset heist in public reporting for that period; also cited as a dominant driver of 2025 theft totals in at least one major dataset.

Why it matters strategically: It demonstrates that multisig and “cold storage” models can fail at the human-interface layer, and that third-party dependency compromise can create single points of catastrophic failure if transaction intent is not independently verifiable at signing time.

What happened: Public analytics and incident reporting describe an approximately $285M–$286M theft from Drift, a Solana-based protocol, on April 1, 2026, with multiple indicators assessed as consistent with DPRK-linked techniques; several sources explicitly note that formal attribution was still developing as investigations progressed.

Attack path (publicly described): Reporting emphasizes a control-layer compromise: social engineering and multisig governance manipulation (including durable nonces), followed by administrative takeover and the use of a fabricated collateral asset to extract real liquidity, all executed via valid signatures rather than a contract “bug.”

Loss/impact: Approximately $285M–$286M, described as a major DeFi exploit and notable for speed of execution once admin control was obtained.

Why it matters strategically: It illustrates that governance and signer compromise can be a higher-leverage attack surface than code vulnerabilities, and that removing or weakening administrative guardrails (e.g., timelocks) collapses the response window that makes intervention possible.

What happened: U.S. and Japanese authorities publicly identified a $308M cryptocurrency theft from DMM Bitcoin in May 2024 and associated it with TraderTraitor activity, describing specific initial-access and workflow-manipulation steps.

Attack path (publicly described): The public description includes a recruiter lure, delivery of malicious code under the guise of a hiring test, theft of session artifacts, impersonation within operational systems, and manipulation of a legitimate transaction request, showing how “normal business processes” can be weaponized into a theft path.

Loss/impact: 4,502.9 BTC valued at $308M at time of theft (as described in the public statement).

Why it matters strategically: It provides a rare, high-specificity view into how a targeted social engineering foothold can translate into influence over wallet-management workflows without requiring a loud, multi-exploit intrusion into core infrastructure.

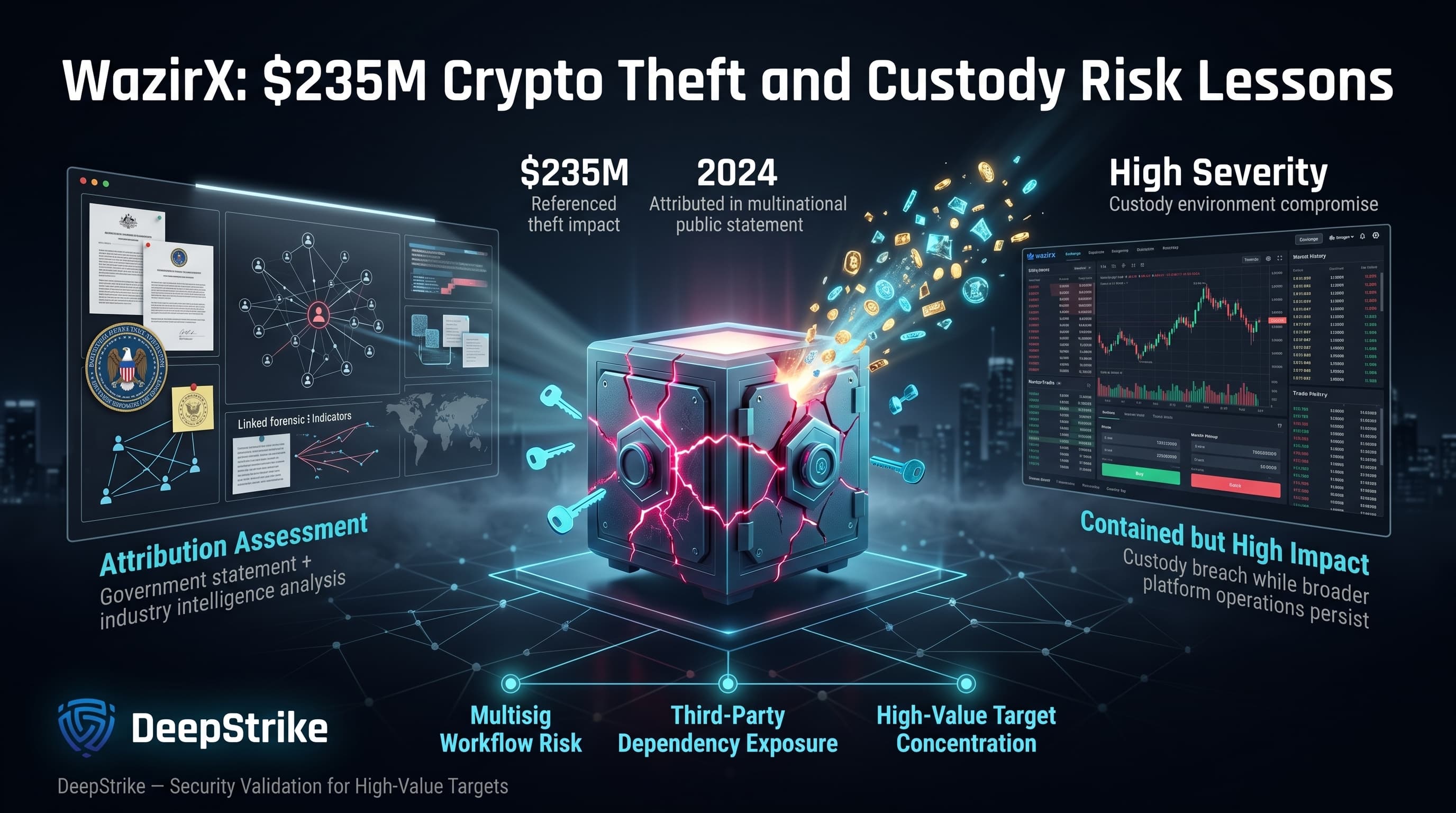

What happened: A multinational government joint statement attributed multiple 2024 thefts to DPRK actors and additionally attributed (based on detailed industry analysis) a $235M theft against WazirX to the DPRK, reflecting assessment language rather than a claim of universal consensus.

Attack path (publicly described): The exchange’s own preliminary report describes a cyberattack affecting a multisig wallet; public reporting and ongoing investigation narratives have included competing theories around custody workflows and external dependencies, so definitive root-cause claims should be treated cautiously unless validated by final forensic findings.

Loss/impact: $235M (as referenced in the joint statement attribution list for 2024 thefts).

Why it matters strategically: It shows how attribution can rest on a combination of government assessment and private-sector analysis, and it reinforces that multisig custody environments can be high-impact targets even when the broader exchange stack remains operational.

What happened: Treasury sanctions reporting and public investigations tied the March 2022 Ronin bridge theft associated with Axie Infinity to DPRK-linked actors.

Attack path (publicly described at high level): Compromise of validator control sufficient to authorize fraudulent withdrawals, followed by laundering through mixer infrastructure and additional conversion rails.

Loss/impact: Almost $620M at the time of reporting.

Why it matters strategically: It remains a baseline case for bridge-scale theft plus sanctions-targeted laundering, and it shows how validator or governance compromise can produce catastrophic losses at ecosystem scale.

For security leadership, DPRK-linked crypto theft is best treated as a low-frequency, high-impact operational integrity risk rather than a conventional “cyber incident rate” problem. The critical failure modes occur at the intersection of identity, privileged access, and custody-control failure — especially where third-party dependencies and UI-based approvals exist.

For platform engineering, the recurring lesson is that Web2 controls (build pipelines, cloud credential security, device posture, secure delivery of critical interfaces) are inseparable from Web3 security outcomes. Public post-mortems describe attacker success via compromised front-end delivery and stolen session artifacts — precisely the kinds of failures that are invisible if a security program focuses only on smart contract auditing.

For wallet operations, the operational objective is transaction intent integrity:

For compliance teams, the strategic shift is from static blocklists to typology-driven detection across chains, because adversaries deliberately fragment and route flows to defeat simplistic controls. Enforcement actions against laundering nodes (e.g., high-risk exchanges and escrow/marketplace networks) provide an important signal: treat exposure to these hubs as a first-class risk indicator, not a last-step after-the-fact flag.

For incident response leaders, the response model must assume that the first hours are decisive. Government advisories describe rapid dispersion across addresses and chains after major thefts, and analytics describe multi-wave laundering cycles; both imply that prepared, pre-authorized response paths (freezing requests, exchange-to-exchange coordination, bridge alerts, internal kill-switches) are required to meaningfully increase recovery probability.

Effective reduction of DPRK-linked theft risk comes from hardening the points where the attacker’s model requires trust: identity, privileged access, and authorization integrity.

Strengthen operator identity verification and remote-work controls. Treat remote-worker fraud as a security control problem: identity verification, device attestation, geolocation anomalies, and strict restrictions on which roles can touch custody workflows. DOJ reporting on remote IT worker schemes and “laptop farm” facilitation shows that adversaries can persist at scale when hiring, onboarding, and device-location assumptions are weak.
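
As one way to make this control concrete, the checks named above can be evaluated together before any custody-adjacent session is granted. This is a minimal sketch, not a reference implementation: `AccessAttempt`, `CUSTODY_ROLES`, and the field names are hypothetical, and a real deployment would source these signals from its own identity provider and device-management tooling.

```python
from dataclasses import dataclass

# Hypothetical allowlist of roles permitted to touch custody workflows.
CUSTODY_ROLES = frozenset({"signer", "wallet-ops"})


@dataclass(frozen=True)
class AccessAttempt:
    user: str
    role: str
    identity_verified: bool   # e.g. outcome of an identity re-verification step
    device_attested: bool     # e.g. managed device passed attestation
    login_country: str        # where the session originates
    expected_country: str     # where onboarding records say the worker is


def custody_access_findings(attempt: AccessAttempt) -> list[str]:
    """Return reasons to deny custody access; an empty list means no finding."""
    findings = []
    if attempt.role not in CUSTODY_ROLES:
        findings.append("role not permitted to touch custody workflows")
    if not attempt.identity_verified:
        findings.append("identity not verified")
    if not attempt.device_attested:
        findings.append("device not attested")
    if attempt.login_country != attempt.expected_country:
        findings.append("geolocation anomaly")
    return findings


attempt = AccessAttempt("contractor-17", "developer", True, False, "XX", "US")
print(custody_access_findings(attempt))
```

Returning every finding, not just the first, gives HR and security teams a shared record when a hire turns out to be operating through a relay device.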

Harden privileged workflow paths for custody operations. Separate duties between transaction creation, approval, and execution; require multi-party review for changes to governance parameters; and implement step-up authentication for any action that changes signer sets, limits, whitelists, or timelock behavior. Drift incident analysis shows how control-plane misuse can be catastrophic even with valid signatures.
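
The separation-of-duties rule described here can be checked mechanically before a transaction or governance change is released. The sketch below assumes a simple model in which each action records one creator, a set of approvers, and one executor; the function name and the default of two approvers are illustrative assumptions.

```python
def separation_violations(creator: str, approvers: list[str], executor: str,
                          min_approvers: int = 2) -> list[str]:
    """Return separation-of-duties violations for one custody action."""
    violations = []
    distinct_approvers = set(approvers)
    if creator in distinct_approvers:
        violations.append("creator also approved")
    if executor == creator:
        violations.append("executor is the creator")
    if executor in distinct_approvers:
        violations.append("executor also approved")
    if len(distinct_approvers) < min_approvers:
        violations.append("too few distinct approvers")
    return violations


print(separation_violations("alice", ["bob", "carol"], "dave"))   # no violations
print(separation_violations("alice", ["alice", "bob"], "bob"))    # overlapping duties
```

A check like this does not stop an attacker who controls several identities, which is why it is paired with step-up authentication and intent review in the surrounding recommendations.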

Reduce UI-based trust with transaction legibility at signing time. Treat “what the signer sees” as a hostile interface. Post-incident analysis of the Bybit case stresses that the interface layer can be the decisive weakness and promotes clear, device-verified signing as a mitigation direction.
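
One way to reduce reliance on the interface is to compare the intent that was approved through a separate channel with the fields decoded independently from the payload that is actually about to be signed. The sketch below assumes both sides are already available as plain dictionaries; decoding a real transaction payload is chain-specific and is not shown. The field names and sample values are hypothetical.

```python
import hashlib
import json


def intent_digest(fields: dict) -> str:
    """Digest of a canonical JSON encoding, suitable for reading back out of band."""
    canonical = json.dumps(fields, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def intent_mismatches(approved: dict, decoded: dict) -> list[str]:
    """Names of fields that differ between approved intent and the decoded payload."""
    keys = sorted(set(approved) | set(decoded))
    return [k for k in keys if approved.get(k) != decoded.get(k)]


# Hypothetical values: what reviewers approved vs. what the payload encodes.
approved = {"to": "0xTREASURY", "value": "1000", "operation": "call"}
decoded = {"to": "0xATTACKER", "value": "1000", "operation": "delegatecall"}

if intent_digest(approved) != intent_digest(decoded):
    print("refuse to sign; mismatched fields:", intent_mismatches(approved, decoded))
```

The design point is that the comparison runs outside the web interface being reviewed, so a tampered front end cannot also tamper with the check.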

Secure third-party dependencies and interface delivery. Apply software supply chain controls (build signing, integrity checks, dependency pinning, hardened CI/CD, continuous monitoring of hosted assets) for any wallet UI, admin console, or signing coordinator. Investigations into the Bybit incident describe modification of delivered front-end resources, illustrating why CDN/S3-style delivery chains must be monitored as critical infrastructure.
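
Continuous monitoring of hosted assets can start with something as simple as comparing the bytes currently served against digests pinned at build time. The sketch below is an illustration under assumptions: the manifest format (path to SHA-384 hex digest) and the function names are hypothetical, and fetching the served bytes from the delivery chain is left to the caller.

```python
import hashlib


def sha384_hex(content: bytes) -> str:
    """Hex SHA-384 digest of an asset body."""
    return hashlib.sha384(content).hexdigest()


def changed_assets(pinned: dict[str, str], served: dict[str, bytes]) -> list[str]:
    """Paths whose served bytes are missing or do not match the pinned digest."""
    drifted = []
    for path, expected in pinned.items():
        body = served.get(path)
        if body is None or sha384_hex(body) != expected:
            drifted.append(path)
    return sorted(drifted)


# Hypothetical build output and a tampered copy observed in production.
release = {"app.js": b"renderTransaction(tx);"}
pinned = {path: sha384_hex(body) for path, body in release.items()}
observed = {"app.js": b"renderTransaction(swap(tx));"}
print(changed_assets(pinned, observed))
```

Running the comparison from a vantage point the web team's credentials cannot modify matters as much as the hash itself, since the reported compromise path went through cloud access.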

Implement intent-aware transaction policy enforcement. For both CEX and DeFi contexts, monitor and block abnormal administrative intent (e.g., disabling timelocks, whitelisting new collateral, raising limits sharply, changing admin keys) before execution. Public analysis around the Drift incident highlights the need for pre-execution evaluation of governance actions.
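
A pre-execution policy gate for administrative actions might look like the following sketch. The action types mirror the examples in the paragraph above; the dictionary shape, the `HIGH_RISK_ACTIONS` set, and the 2x limit threshold are assumptions made for illustration, and "block" here means holding the action for out-of-band review, not a verdict on who submitted it.

```python
# Hypothetical action types that should never execute without out-of-band review.
HIGH_RISK_ACTIONS = frozenset({
    "disable_timelock",
    "whitelist_collateral",
    "change_admin_key",
})
MAX_LIMIT_MULTIPLIER = 2.0  # assumed threshold for a "sharp" limit increase


def evaluate_admin_action(action: dict) -> str:
    """Return 'block', 'review', or 'allow' for a proposed administrative action."""
    kind = action["type"]
    if kind in HIGH_RISK_ACTIONS:
        return "block"
    if kind == "raise_limit":
        old, new = action["old_limit"], action["new_limit"]
        if old <= 0 or new / old > MAX_LIMIT_MULTIPLIER:
            return "block"
        return "review"
    return "allow"


proposals = [
    {"type": "disable_timelock"},
    {"type": "raise_limit", "old_limit": 1_000_000, "new_limit": 50_000_000},
    {"type": "raise_limit", "old_limit": 1_000_000, "new_limit": 1_200_000},
    {"type": "update_fee_recipient_label"},
]
for proposal in proposals:
    print(proposal["type"], "->", evaluate_admin_action(proposal))
```

Because the gate evaluates intent and not signature validity, it still applies when every signature on the action is genuine.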

Build multi-chain laundering detection tied to typologies. Analytics on DPRK laundering emphasize fragmentation, bridge usage, and specialized service preferences, plus multi-wave timelines. Detection should correlate flows across chains and treat exposure to known high-risk laundering hubs as a rapid-escalation signal.
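
Fragmentation is one typology that lends itself to a simple heuristic: flag any source address that pays many distinct destinations inside a short window. The sketch below assumes transfers have already been normalized across chains into `(source, destination, hours_since_reference)` tuples; the thresholds and the function name are illustrative assumptions, not values from the cited analytics.

```python
from collections import defaultdict


def fan_out_sources(transfers, window_hours=24.0, min_destinations=50):
    """Sources that reach min_destinations distinct recipients within any window."""
    by_source = defaultdict(list)
    for src, dst, t in transfers:
        by_source[src].append((t, dst))

    flagged = set()
    for src, events in by_source.items():
        events.sort()                      # order by time
        start = 0
        in_window = defaultdict(int)       # destination -> count inside the window
        for t, dst in events:
            in_window[dst] += 1
            # Drop events that fell out of the sliding window ending at t.
            while t - events[start][0] > window_hours:
                old_dst = events[start][1]
                in_window[old_dst] -= 1
                if in_window[old_dst] == 0:
                    del in_window[old_dst]
                start += 1
            if len(in_window) >= min_destinations:
                flagged.add(src)
                break
    return flagged


# Hypothetical data: "hot" splits funds quickly, "slow" spreads payments over weeks.
sample = [("hot", f"addr{i}", 0.1 * i) for i in range(60)]
sample += [("slow", f"peer{i}", 48.0 * i) for i in range(60)]
print(fan_out_sources(sample))
```

On its own this will also flag legitimate payout services, so it is best used as an escalation signal combined with exposure to known high-risk hubs.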

Run continuous penetration testing and custody-focused tabletop exercises. DPRK-linked cases repeatedly demonstrate that the decisive failures are operational and human-process driven: recruitment lures, session hijacking, workflow manipulation, and third-party compromise. Tabletop exercises should simulate “signing interface compromise” and “governance admin takeover” scenarios, not only “smart contract exploit” scenarios.

Adopt baseline mitigations that consistently appear in government guidance. Joint advisories recommend defense-in-depth, timely patching, MFA, and user education against social engineering because these remain dominant entry points. These are foundational controls, but they must be applied specifically to roles and systems that touch custody and admin workflows.

The latest available public analytics report approximately $2.02B stolen by DPRK-linked actors in 2025, with multi-year lower-bound totals reported in the multi-billions; official reporting and other analytics providers publish different conservative ranges based on attribution thresholds.

The repeatable model is: targeted initial access (often social engineering) → privilege/trust abuse inside operational tooling → compromised signing or admin authority → rapid dispersal and laundering, sometimes across multiple chains.

They concentrate liquidity behind a small number of operational controls (signers, admin keys, withdrawal governance). If transaction intent can be manipulated at signing time, “cold storage” assumptions can fail despite institutional controls.

Public analytics describe high fragmentation, heavy cross-chain movement, and a multi-wave pattern that often unfolds over weeks (around a 45-day window) when active on-chain laundering occurs, while law enforcement reporting describes rapid early dispersion across many addresses and chains.

Latest available reporting suggests DeFi losses have been suppressed relative to TVL in 2024–2025 in at least one dataset, but major control-layer governance compromises (e.g., multisig/admin takeover) can still produce very large losses.

They are a documented initial-access method: in a high-confidence case, authorities described a recruiter persona delivering malicious code as a hiring test, later enabling session hijacking and transaction workflow manipulation.

Prioritize transaction intent integrity (clear, device-level verification), hard separation of custody duties, strict controls on administrative changes, continuous monitoring of critical UI/supply-chain dependencies, and rehearsed multi-chain incident response.

Because the distribution is outlier-driven: a small number of catastrophic events can dominate total losses, and attribution datasets are updated as new evidence strengthens or weakens linkages to specific actors.

The latest available public reporting does not support a single-cause explanation for DPRK crypto theft. The pattern is an operational model: disciplined initial access (often social engineering), conversion of trust into privileged workflow control, exploitation of signing and governance blind spots, and structured laundering designed to survive compliance pressure and interdiction attempts.

For defenders, the most actionable framing is not “stop a hack,” but “protect authorization integrity.” That means making transaction intent verifiable at signing time, hardening administrative pathways and timelocks, controlling remote-worker and contractor access, and building multi-chain laundering detection that reflects DPRK-specific typologies rather than generic fraud patterns.

Ultimately, how North Korean hackers stole billions in crypto is best understood as a repeatable sequence that exploits ecosystem-level weaknesses: people, process, and dependency trust. Exchanges and VASPs that build controls around that sequence — especially around signing legibility, privileged access governance, rapid incident containment, and multi-chain laundering detection — reduce both theft probability and catastrophic blast radius.

Mohammed Khalil is a Cybersecurity Architect at DeepStrike, specializing in advanced penetration testing and offensive security operations. With certifications including CISSP, OSCP, and OSWE, he has led numerous red team engagements for Fortune 500 companies, focusing on cloud security, application vulnerabilities, and adversary emulation. His work involves dissecting complex attack chains and developing resilient defense strategies for clients in the finance, healthcare, and technology sectors.
