April 14, 2026

Updated: April 14, 2026

A data-led view of how generative AI is amplifying phishing, impersonation, fraud, and identity abuse and how enterprises should interpret the numbers.

Mohammed Khalil

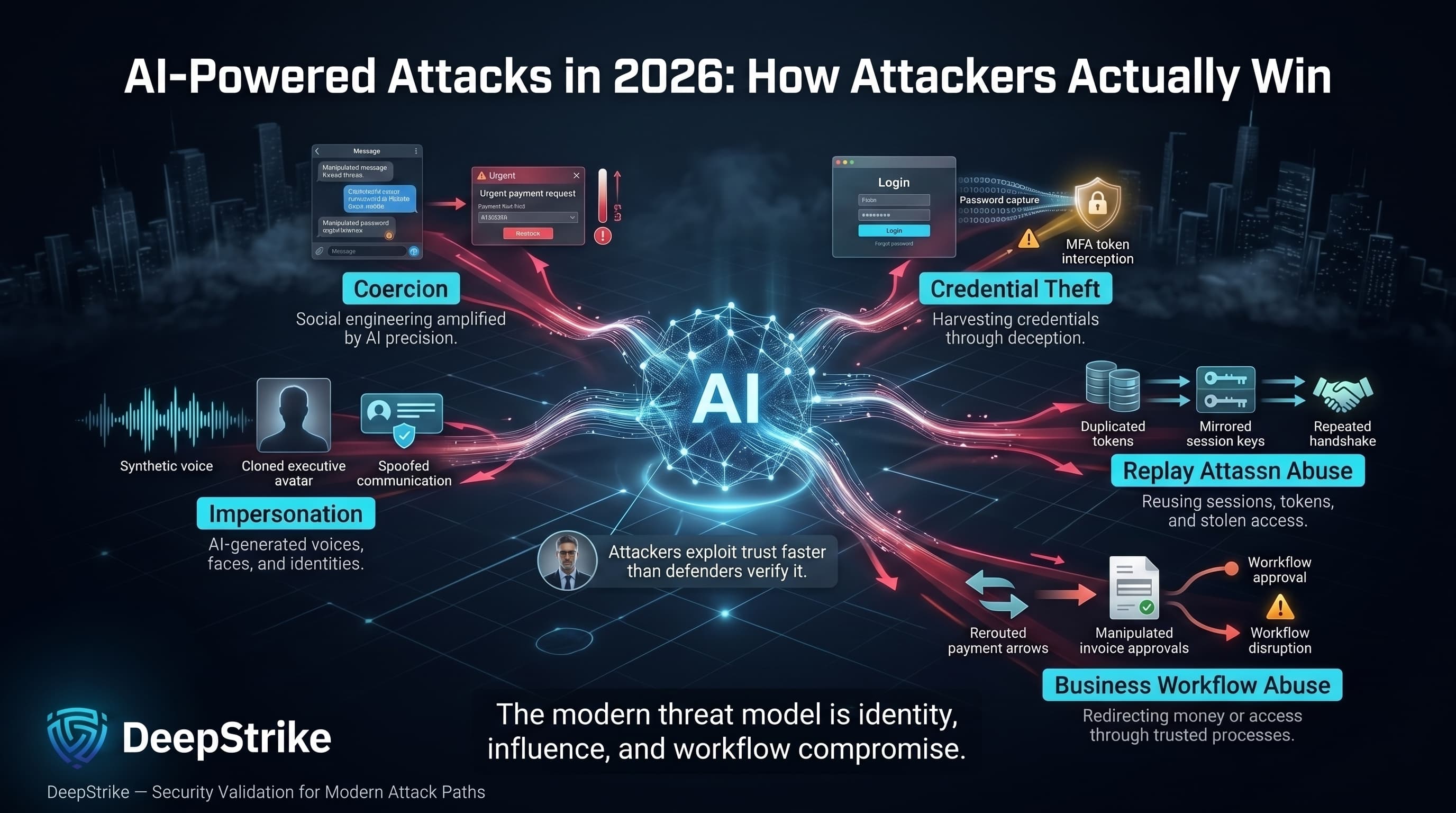

The 2026 conversation about AI-powered attacks statistics is finally anchored in measurements that reflect how attackers actually win: they impersonate, they coerce, they harvest or replay credentials, and they redirect money or access through business workflows.

Three data points frame the problem. First, the FBI’s complaint dataset for 2024 (US reporting) recorded 859,532 complaints and $16.6B in reported losses, showing how large the fraud and cyber-enabled crime surface remains even before you isolate AI as a factor. Second, IBM/Ponemon breach research reports that 16% of breaches involved attackers using AI, with AI-driven tactics concentrated on phishing and deepfake-enabled manipulation rather than novel exploitation at scale. Third, Verizon’s breach investigations show that synthetically generated text in malicious emails has doubled over two years, while 15% of employees routinely accessed GenAI systems on corporate devices, often outside centralized identity controls.

Operationally, AI-powered attacks are not “a new type of breach.” They are an efficiency upgrade on familiar compromise paths: phishing that reads like internal communications, executive impersonation that survives quick voice checks, and fraud workflows that exploit gaps between identity systems, finance procedures, and SOC visibility. The strategic risk is that trust signals (voice, writing style, meeting context, vendor email threads) are now cheaper to counterfeit, while verification controls remain inconsistent across departments and third parties.

AI-Powered Attacks Statistics refer to quantified data about attacks in which adversaries use artificial intelligence, generative AI, or AI-assisted automation to improve the realism, scale, speed, targeting, or effectiveness of malicious activity, including phishing, impersonation, fraud, and other cyber-enabled abuse patterns.

AI-powered attack measurement is fragmented because it describes a mechanism (AI assistance), not a single observable event type. In practice, statistics fall into several categories.

Complaint and loss datasets measure what victims report and what they believe happened. The FBI IC3 dataset is a strong example: it tracks complaints and reported losses by category (phishing, BEC, investment fraud, etc.), which is useful for understanding financial impact, but it does not reliably attribute whether AI was used in the content generation or identity manipulation step.

Enterprise detection signals measure what security tooling observes. Verizon’s breach research includes an explicit statement that synthetic text in malicious emails doubled over two years (based on partner-provided data), which is closer to “AI content observed in attacks,” but still depends on the detection method used to classify text as synthetic.

Breach research and surveys measure patterns across organizations that experienced breaches. IBM/Ponemon’s work is particularly relevant in 2026 because it explicitly quantifies attacker AI use in breaches (16%) and breaks down the AI-driven attack types (phishing/synthetic communication vs deepfakes).

A practical example helps separate these lenses. A reported AI-enabled fraud complaint might be a victim telling IC3 they received a realistic voice call “from the CEO” requesting a transfer (complaint + loss). An AI-generated phishing detection might be an email security stack classifying a message as synthetic or highly likely machine-generated text (telemetry). A deepfake impersonation case might be a finance team receiving a voice message, later found to be cloned audio, that bypassed a weak callback process (case pattern). Full enterprise business impact is the combined cost of containment, downtime, investigation, legal exposure, and remediation, which is captured more directly in breach cost research.

The key discipline: surveys, fraud complaints, threat intelligence observations, and breach datasets are not interchangeable. They answer different questions, on different populations, with different biases (underreporting, detection gaps, and inconsistent AI attribution).

Global AI-powered attack statistics are best understood as a convergence of three forces: rising synthetic content in social engineering, a widened governance gap (shadow AI and unmanaged accounts), and increasing executive concern about AI risk.

| Metric | 2025 | 2026 or Latest Available | Trend | Notes |
|---|---|---|---|---|
| Organizations assessing AI tool security before deployment | 37% | 64% | Up | Global survey measure of governance/process maturity, not attack volume. |
| Share of breaches involving attackers using AI | N/A (newly quantified) | 16% | Baseline established | From breach research; describes breaches with reported attacker AI use. |
| AI-driven attack types in AI-involved breaches: phishing/synthetic comms | N/A | 37% | Baseline established | Share within AI-driven attack type breakdown in breaches. |
| AI-driven attack types in AI-involved breaches: deepfakes | N/A | 35% | Baseline established | Share within AI-driven attack type breakdown in breaches. |
| Employees routinely accessing GenAI on corporate devices | 15% | Latest: 15% (as of early 2025) | Baseline | Exposure signal indicates unmanaged AI identities and potential data leakage paths. |
| GenAI accounts on corporate devices outside enterprise identity controls | 72% personal email / 17% corporate without integrated authentication | Latest: same (as of early 2025) | Baseline | A proxy for logging, DLP, and SSO enforcement gaps. |
| AI-supported phishing share of observed social engineering activity | N/A | “>80%” (reported, early 2025) | Baseline | Reported in ENISA with methodological caveats and “reportedly” qualifier. |

Sources for the metrics above include IBM/Ponemon breach research, Verizon breach investigations, ENISA threat landscape reporting, and a global executive survey on cybersecurity.

Interpretation: the most meaningful shift is not “every attack is AI-powered,” but that AI is measurably present in a non-trivial portion of breaches (16% in IBM’s dataset) and is disproportionately concentrated in human manipulation (phishing and deepfakes), where enterprise controls are often weakest (verification, approvals, and identity proofing). A second shift is governance: organizations are increasing pre-deployment AI security assessment (37% to 64%), but Verizon’s identity evidence shows that real usage still bypasses corporate identity controls (personal accounts, non-integrated auth). Finally, ENISA’s “reportedly >80%” figure should be treated as an external reporting-based indicator rather than a universal measurement, but it aligns directionally with Verizon’s synthetic text observations.

AI-powered attacks create two different cost curves: direct fraud loss (money transferred out) and enterprise breach/response cost (containment, investigation, downtime, legal exposure). Conflating them leads to bad decisions, particularly in budgeting, insurance discussions, and board reporting.

In breach economics, IBM/Ponemon reports a 2025 global average breach cost of $4.44M, down 9% from $4.88M the prior year, with faster identification and containment cited as a contributor. Within the same dataset, the average breach cost where AI was involved in the execution of the security incident is reported at $4.49M, while breaches involving shadow AI are higher at $4.63M. That delta matters: shadow AI is not just “policy noncompliance,” it is an additional unmonitored interface into sensitive data and workflows.

On fraud losses, the FBI IC3 dataset is the most defensible public baseline for reported losses in the US: $2.77B in reported losses tied to Business Email Compromise in 2024, alongside $16.6B in total losses across complaint types. BEC itself is not inherently “AI-powered,” but it is structurally well-suited to AI: the attacker’s bottleneck is language quality, personalization, and rapid iteration, which are exactly the costs that generative models reduce.

| Indicator | Value | Change YoY | Notes |
|---|---|---|---|
| Global average cost of a data breach (all causes) | $4.44M | -9% (vs $4.88M) | Enterprise cost model; includes investigation, response, lost business, etc. |
| Breach cost where AI was involved in executing the incident | $4.49M | N/A | Average cost in the breach research dataset. |
| Breach cost involving shadow AI | $4.63M | N/A | Higher than other AI-related categories in the same dataset. |
| Added breach cost for high shadow AI exposure | +$670K | N/A | Difference between high vs low/no shadow AI environments. |
| Added breach cost for breaches involving shadow AI (global average lift) | +$200K | N/A | Increment above global average breach cost. |
| Total reported losses (US complaints, all categories) | $16.6B | +33% | Complaint-reported losses; not total global cyber loss. |
| Reported BEC losses (US complaints) | $2.77B | -6% (vs $2.95B) | Fraud loss; excludes many indirect enterprise costs. |
| Median ransomware payment (DBIR dataset) | $115,000 | N/A | Payment metric; distinct from total incident cost. |

Sources: IBM/Ponemon breach cost research; FBI IC3 complaint reporting; Verizon breach investigations.
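
The year-over-year figures above can be re-derived from the quoted values, which is a useful sanity check before reusing them in board material. The following is a minimal sketch; the variable names are illustrative, and the inputs are simply the rounded figures quoted in this section. Subtracting the rounded averages gives a shadow AI lift of about $190K, which is consistent with the reported figure of roughly +$200K.

```python
def pct_change(current: float, prior: float) -> float:
    """Return the year-over-year change as a percentage of the prior value."""
    return (current - prior) / prior * 100.0


# Rounded figures quoted in this section (USD).
global_avg_breach = 4.44e6   # global average breach cost
prior_avg_breach = 4.88e6    # prior-year global average breach cost
shadow_ai_breach = 4.63e6    # average breach cost involving shadow AI
bec_losses = 2.77e9          # reported BEC losses, 2024
prior_bec_losses = 2.95e9    # reported BEC losses, prior year

breach_yoy = pct_change(global_avg_breach, prior_avg_breach)
bec_yoy = pct_change(bec_losses, prior_bec_losses)
shadow_ai_lift = shadow_ai_breach - global_avg_breach

print(f"Breach cost YoY: {breach_yoy:.1f}%")
print(f"BEC losses YoY: {bec_yoy:.1f}%")
print(f"Shadow AI lift over global average: ${shadow_ai_lift:,.0f}")
```

Keeping fraud-loss and breach-cost inputs in separate variables mirrors the point above: the two cost curves should not be summed or compared as if they measured the same thing.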

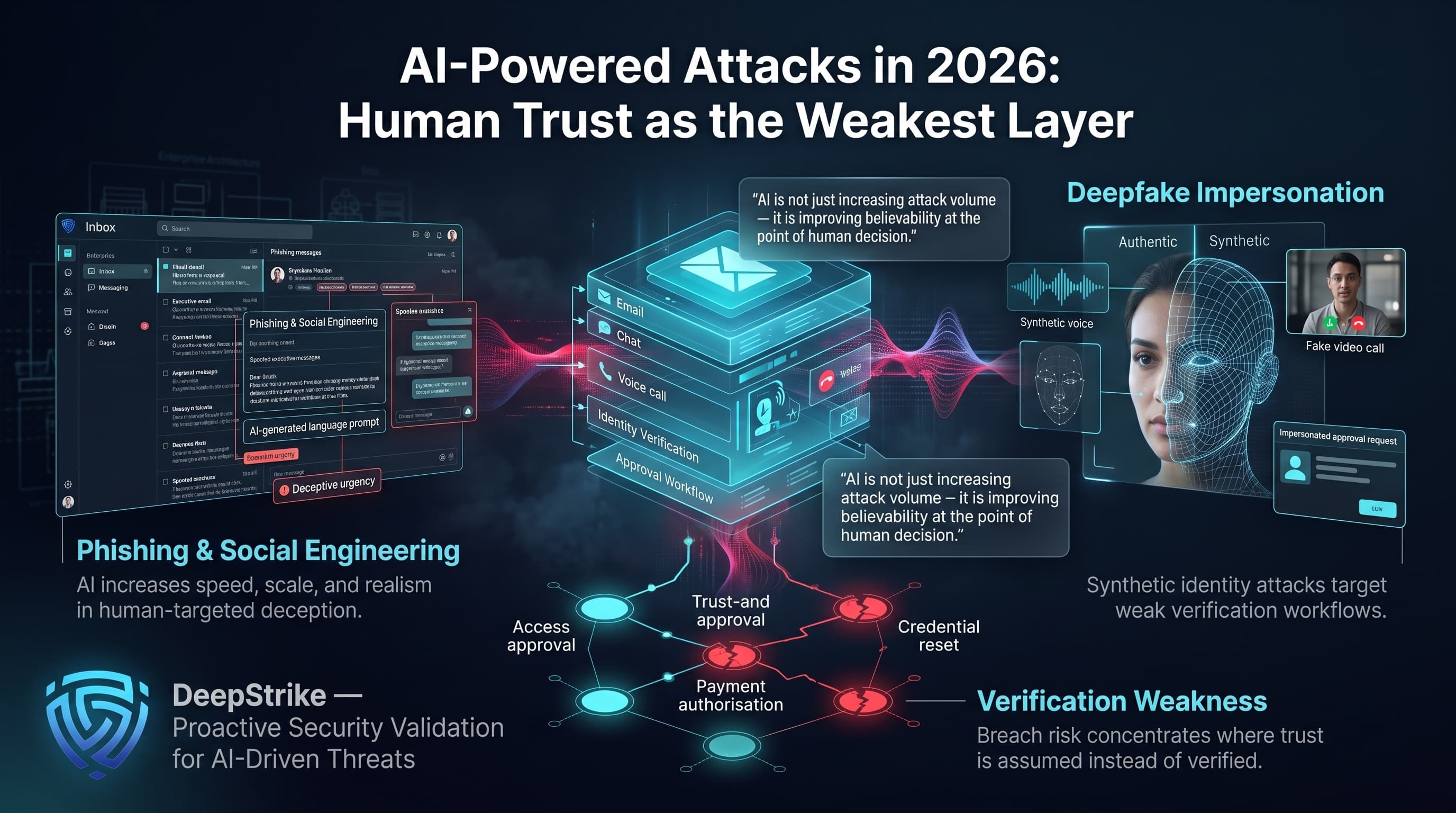

AI materially changes phishing economics by collapsing the time cost of personalization, localization, and iteration. Verizon explicitly reports synthetic text in malicious emails doubling over two years, which is consistent with increased attacker capability to generate high-variance lures that evade rule-based detections.

Controlled human-subject research supports the operational impact. A 2024 study evaluating fully automated spear phishing reported a 54% click-through rate for AI-generated emails compared to 12% for a control group of arbitrary phishing emails, with human-expert phishing also at 54% and human-in-the-loop AI at 56%. This is not “global prevalence,” but it is decision-grade evidence that AI closes the quality gap that awareness programs historically depended on (poor grammar, cultural mismatches, inconsistent tone).

Operational meaning: treat phishing as an identity event, not an email event, because the downstream objective is credential capture, session hijack, or approval manipulation.

Deepfakes are no longer limited to disinformation; they are a fraud primitive. IBM/Ponemon breach research reports deepfake attacks account for 35% of AI-driven attack types in breaches involving attacker AI use. Financial regulators also moved from “theory” to “reporting”: FinCEN issued an alert describing observed increases in suspicious activity reporting that references deepfake media, including deepfake identity documents used to circumvent identity verification and authentication in financial institutions.

Operational meaning: voice is no longer a sufficient authentication factor for executives, vendors, or help desks. If an approval process accepts a voice message as a “strong signal,” it is already behind the threat curve. Switzerland’s national cyber authority explicitly reports deepfake audio calls and voice messages being used in CEO fraud scenarios, including multi-million-franc losses in at least one case.

BEC remains a top fraud category by reported losses, with $2.77B reported in the US complaint dataset for 2024. AI doesn’t invent BEC; it improves the pretext layer: consistent tone, internal jargon, multilingual phrasing, and rapid rewriting that reduces defender reliance on static indicators.

Verizon’s findings on GenAI usage (15% of employees routinely accessing GenAI on corporate devices, with 72% using personal email identifiers and 17% using corporate emails without integrated authentication) matter here because they increase the chance that sensitive language, invoice templates, vendor details, and project context are exposed to tooling outside enterprise governance. That exposure increases the realism of attacker-crafted messages once any mailbox history, CRM exports, or shared documents are compromised.

AI-enabled social engineering increases the success rate of identity attacks even when the “technical” breach is simple: credential stuffing, session token theft, password resets, and help desk manipulation.

Two signals matter. First, Verizon reports that infostealer credential logs show 30% of compromised systems were enterprise-licensed devices, and 46% of compromised systems with corporate logins were non-managed, an identity sprawl problem that fuels account takeover attempts. Second, FinCEN explicitly notes deepfake identity documents being used to bypass identity verification in account opening and related fraud schemes, showing that synthetic identity is no longer theoretical in regulated environments.

Operational meaning: if identity proofing is inconsistent between HR onboarding, IT support, and finance/vendor management, AI will amplify the weakest link. For more context on identity compromise drivers, see DeepStrike’s write-up on compromised credential trends.

The most reliable evidence for “AI reconnaissance” in enterprise crime is not autonomous vulnerability exploitation; it is better preparation for manipulation. Switzerland’s national cyber authority describes CEO fraud preparation explicitly: attackers analyze public sources (company sites, social platforms, registries) to identify hierarchy, responsibilities, and absences, then use AI tools to mimic writing style and phrasing.

Operational meaning: the attacker’s prep phase is increasingly fast and repeatable. Security programs should treat executive communications metadata (who approves what; who is traveling; who changes bank details) as sensitive operational data, not “public relations content.”

A useful way to view AI-powered attack chains is as multi-step workflows that combine research, synthetic content generation, trust exploitation, and either credential capture or payment diversion. The statistics below mix breach research (what happened in breached organizations) and enterprise exposure signals (how likely it is that a workflow can be abused).

| Vector / Method | Share of Incidents or Relevance | Avg Impact / Cost | Notes |
|---|---|---|---|
| AI-generated phishing or synthetic comms | 37% (within AI-driven attack types in breaches) | $4.49M avg breach cost (AI-in-execution category) | Share is within the subset of breaches involving attacker AI use. |
| AI deepfake attacks | 35% (within AI-driven attack types in breaches) | $4.49M avg breach cost (AI-in-execution category) | Deepfakes appear as a major manipulation method where AI is used. |
| Shadow AI exposure (unsanctioned AI in breach) | 20% of orgs reported breach due to shadow AI | +$200K average lift; +$670K in high exposure | Cost and prevalence are from breach research survey results. |
| GenAI access outside enterprise identity controls | High relevance | N/A | 72% personal email identifiers; 17% corporate without integrated auth in one dataset. |
| CEO fraud with AI voice cloning | High relevance | “Several million” CHF loss (case example) | Country-specific reporting indicates real-world operationalization. |

Sources: IBM/Ponemon breach research; Verizon DBIR; Switzerland NCSC reporting.

Attack-chain mapping (practitioner view): AI often improves steps that sit outside classic endpoint telemetry (message realism, conversation continuity, urgency cues, and roleplay), so defenders should map controls to the workflow, not the channel. A typical chain is: target research → synthetic message generation → trust trigger (urgency/authority) → credential capture or approval manipulation → payment diversion or access expansion → follow-on fraud and clean-up.
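
One way to make "map controls to the workflow" concrete is to record each chain stage with the controls meant to address it, then list the stages that have no deployed control. The sketch below is a minimal illustration. The stage names follow the chain described above, but the stage-to-control mapping, the `ChainStage` type, and the `uncovered_stages` helper are assumptions made for this example and are not taken from the cited reports.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChainStage:
    """One step of the attack chain and the controls assumed to address it."""
    name: str
    controls: tuple[str, ...]


# Stage names follow the chain in the text; the control mapping is an
# illustrative assumption, not a mapping published by any cited source.
ATTACK_CHAIN = (
    ChainStage("target research", ("restrict approval and travel metadata",)),
    ChainStage("synthetic message generation", ("look-alike domain monitoring",)),
    ChainStage("trust trigger", ("known-channel callback",)),
    ChainStage(
        "credential capture or approval manipulation",
        ("phishing-resistant MFA", "help desk identity proofing"),
    ),
    ChainStage("payment diversion or access expansion", ("dual control for payments",)),
    ChainStage("follow-on fraud and clean-up", ("joint cyber and fraud tabletop exercises",)),
)


def uncovered_stages(chain, deployed_controls):
    """Return the names of stages with no deployed control mapped to them."""
    return [
        stage.name
        for stage in chain
        if not any(control in deployed_controls for control in stage.controls)
    ]


# Hypothetical organization that has only rolled out two of the controls.
deployed = {"phishing-resistant MFA", "dual control for payments"}
for name in uncovered_stages(ATTACK_CHAIN, deployed):
    print(f"No mapped control deployed for stage: {name}")
```

Listing gaps by stage rather than by channel keeps the review focused on where a manipulated decision could still go through, whichever medium carried the request.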

Industry exposure to AI-powered attacks depends heavily on workflow shape: payment urgency, customer onboarding volume, reliance on approval chains, and identity sprawl across SaaS and third parties. Verizon’s breach dataset spans 139 countries and provides incident/breach frequency and dominant patterns by sector, which is useful for relative exposure framing.

| Industry | Relative Exposure Level | Typical Impact Pattern | Key Notes |
|---|---|---|---|
| Healthcare | High | Ransomware disruption + identity-driven access abuse | High operational urgency; sensitive data; breach reporting obligations create visibility. |
| Finance | High | BEC, payment diversion, identity and account fraud | High-value transactions; high social engineering pressure; strong fraud reporting signal. |
| Technology | High | Account takeover, session hijack, support/social engineering | Identity sprawl, SaaS token abuse, and public-facing support processes expand the impersonation surface. |
| Manufacturing | Medium–High | Ransomware + supplier and invoice fraud | Supplier dependency, OT/IT disruption costs, and executive urgency increase coercion success. |
| Retail | Medium–High | Payment fraud + credential theft + customer-facing impersonation | High-volume customer identity workflows; fraud blending with customer support. |
| Government / Public Sector | High | Credential compromise + impersonation + disruption | Public trust impact; large identity graphs; frequent social engineering and coercion attempts. |

Verizon’s sector breakdown shows, for example, Finance and Insurance with 3,336 incidents and 927 breaches, Healthcare with 1,710 incidents and 1,542 breaches, and Public Sector with ransomware present in a notable portion of government breaches. This is context for why these sectors remain heavily targeted even before you isolate AI assistance. For a testing strategy baseline, see DeepStrike’s overview of continuous penetration testing.

For global risk planning, regional comparisons are currently stronger in surveys, complaint systems, and cost-of-breach research than in consistent AI-attribution telemetry. A useful approach is to combine (1) regional fraud exposure sentiment, (2) observable complaint reporting, and (3) breach-cost baselines, while explicitly flagging that none of these alone measures “AI-powered attack prevalence.”

| Region | Key Trend | Cost or Impact Signal | Notes |
|---|---|---|---|
| North America | High reported exposure to scams and fraud | 79% reported exposure to digital scams | Survey measure; also strongest complaint-reporting infrastructure (e.g., IC3 for US). |
| Europe and Central Asia | High fraud exposure and regulatory complexity | (Survey shows high exposure; regulatory effectiveness perception varies) | ENISA provides AI-assisted phishing indicators; national cyber centers report CEO fraud adaptation. |
| Sub-Saharan Africa | Highest reported exposure in survey | 82% reported exposure to digital scams | Survey measure; reflects scale of fraud exposure, not AI-specific share. |
| East Asia and Pacific | High fraud exposure in survey | (Within the range shown in survey) | Often high cross-border fraud exposure; measurement varies. |
| Latin America and the Caribbean | High fraud exposure and skills shortages cited | (Within the range shown in survey) | The survey indicates talent gaps and fraud exposure as major issues. |
| Middle East and North Africa | High fraud exposure in survey | (Within the range shown in survey) | High digital adoption; fraud and impersonation remain active. |

In the World Economic Forum’s Global Cybersecurity Outlook 2026 survey, cyber-enabled fraud is described as “reaching record highs,” with 82% of respondents in sub-Saharan Africa reporting exposure to digital scams and 79% in North America. These are exposure signals, not confirmed incident counts. For AI-specific indicators, ENISA reports that AI-supported phishing “reportedly” represented more than 80% of observed social engineering activity worldwide by early 2025, but the report attributes this as reported information and does not present it as a universally measured rate.

Public disclosure of AI-assisted incidents remains inconsistent, so the most useful case examples come from national cyber authorities, regulator alerts, and well-documented fraud reporting. These examples are valuable not because they prove universal prevalence, but because they show how synthetic impersonation is already being operationalized in payment, identity, and executive-trust workflows.

Switzerland’s national cyber authority reported CEO fraud as one of the most frequently reported fraud types, with reported cases rising from 719 to 971 year over year, and explicitly notes that attackers are “increasingly” using AI, including deepfake audio calls and voice messages, in real scam operations.

A widely reported enterprise deepfake case (Hong Kong, public police reporting via media coverage) involved a finance employee transferring approximately $25 million after participating in a video call where multiple “colleagues” and leadership figures were later determined to be synthetic. This illustrates a high-confidence pattern: multi-party deepfake presence is used to defeat the “sanity check” step (asking a colleague on the same call).

FinCEN issued a financial sector alert describing observed increases in suspicious activity reporting that referenced deepfake media, including deepfake identity documents used to circumvent identity verification and authentication. While it does not provide counts, it is a regulator-grade confirmation that deepfakes are appearing in monitored fraud workflows.

The FBI has issued multiple public warnings describing criminal use of generative AI in voice, video, and impersonation-driven fraud schemes, reinforcing that synthetic impersonation is now active across both enterprise fraud and higher-sensitivity public-sector targeting.

Trend selection should stay grounded in what is observable, not what is imaginable.

AI-driven human manipulation is measurable in breach datasets. IBM/Ponemon reports that when attacker AI use was present in breaches, it was largely concentrated in phishing/synthetic communications and deepfake attacks, reinforcing that adversaries are attacking the human layer and verification workflows. The implied security shift is toward stronger identity verification (process + tooling) rather than solely better malware detection.

Shadow AI is a quantifiable cost amplifier. IBM/Ponemon reports that 20% of organizations said they suffered a breach due to security incidents involving shadow AI, with added costs and longer containment timelines, and higher average breach cost where shadow AI was present. The implication is architectural: AI adoption must be treated as a new identity perimeter (accounts, tokens, plugins, connectors) with logging, access controls, and audit.

Verification is being attacked across channels. Switzerland’s cyber authority reports CEO fraud attempts shifting beyond email to WhatsApp and phone, and highlights deepfake audio as a real observed vector while noting manipulated video conferences are seen but remain more complex. This supports a pragmatic trend: attackers choose the cheapest synthetic channel that still closes the deal.

| Attribute | AI-Powered Attacks | Traditional Social Engineering |

|---|---|---|

| Message Scale | High-volume personalization becomes feasible | Scale is limited by human writing time |

| Personalization | Easier to tailor to org tone, role, and context | Often generic or inconsistently tailored |

| Language Quality | High; fewer “tells” (grammar, localization errors) | Often includes linguistic artifacts |

| Impersonation Realism | Higher with voice cloning and synthetic media | Relies on email spoofing and human roleplay |

| Detection Difficulty | Higher for rule-based and keyword systems | More detectable via patterns and errors |

| Operational Cost to Attacker | Lower marginal cost per additional targeted user | Higher marginal cost with each target |

| Business Impact Potential | High where workflows accept weak verification | High, but typically requires more attacker effort |

Research validates that AI can achieve spear-phishing performance comparable to human experts in controlled testing, which changes the economics even if the underlying fraud mechanics remain familiar (credentials and approvals).

The operational conclusion from the latest data is that AI-powered attacks disproportionately exploit verification gaps rather than purely technical control gaps. Most enterprises still design controls assuming humans can reliably detect manipulation, but controlled testing shows AI can remove many of the cues users previously relied on.

Identity verification workflows should be treated as frontline security controls. If 72% of GenAI access accounts on corporate devices are personal emails, and another 17% are corporate emails without integrated authentication, then logging, DLP, and policy enforcement are structurally bypassed for a meaningful slice of employee AI usage. This is not only a data leakage problem; it also increases the realism of outbound fraud once attackers gain internal context such as mailbox content, vendor templates, or shared documents.

Executive protection should be reframed as “approval integrity.” Switzerland’s reporting indicates deepfake audio is already used in CEO fraud, and IBM’s breach research indicates deepfakes are a substantial fraction of AI-driven attack types in breaches. The board-relevant metric is not “deepfake count.” It is: how often do we allow money movement, vendor bank detail changes, or privilege grants based on low-assurance communications?

Budget allocation should follow cost amplifiers. IBM/Ponemon identifies shadow AI as a cost amplifier (+$200K average; +$670K in high exposure), suggesting that governance and inventory of AI usage is not compliance theatre; it is loss control. To operationalize these findings, use validation methods that reflect real attacker behavior: social engineering testing, adversary simulation, and continuous assessment of approval workflows and help-desk proofing. For a testing strategy baseline, see DeepStrike’s overview of continuous penetration testing.

Phishing-resistant MFA is necessary, but it is not sufficient when the attacker’s goal is to coerce an authorized user into performing a high-impact action such as payment release, bank-detail change, or privilege grant. The controls that reduce AI-powered attack loss are the ones that harden verification at decision points.

Implement phishing-resistant authentication (passkeys/hardware-backed) for high-risk roles, but pair it with session protection and conditional access. Verizon’s data shows the identity layer remains a dominant attack surface (credential exposure via non-managed devices; infostealer presence), so reducing credential replay must be continuous, not a one-time rollout.

Enforce out-of-band payment verification with known-good channels, not reply-to email and not phone numbers inside messages. Switzerland’s cyber authority explicitly recommends verification via a second channel using a number you already know, and dual control for payments and master-data changes.
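
The known-channel and dual-control rule above can be expressed as a simple release gate. The following is a minimal sketch under stated assumptions: `PaymentRequest`, `release_decision`, the `KNOWN_GOOD_NUMBERS` directory, and the placeholder phone numbers are all hypothetical names and values invented for this example, and a real payment system would integrate with its own vendor master data and approval records.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of callback numbers recorded BEFORE any request
# arrived (for example, from the vendor master file). Placeholder values.
KNOWN_GOOD_NUMBERS = {"vendor-001": "+00 000 000 0001"}


@dataclass(frozen=True)
class PaymentRequest:
    vendor_id: str
    amount: float
    number_called: Optional[str]   # number actually dialed to verify the request
    approvers: frozenset           # distinct people who approved the release


def release_decision(request, directory=KNOWN_GOOD_NUMBERS, min_approvers=2):
    """Return (allowed, reason) for a payment or master-data change request."""
    known_number = directory.get(request.vendor_id)
    if known_number is None:
        return False, "no known-good callback number on file"
    # A number taken from the message itself never matches the directory entry
    # unless it is the number the organization already knew.
    if request.number_called != known_number:
        return False, "verification did not use the known-good number"
    if len(request.approvers) < min_approvers:
        return False, "dual control not satisfied"
    return True, "release permitted"


# Callback made to a number supplied inside the suspicious message.
spoofed = PaymentRequest("vendor-001", 250_000.0, "+00 000 000 9999",
                         frozenset({"alice", "bob"}))
print(release_decision(spoofed))

# Callback made to the directory number, with two distinct approvers.
verified = PaymentRequest("vendor-001", 250_000.0, "+00 000 000 0001",
                          frozenset({"alice", "bob"}))
print(release_decision(verified))
```

The gate deliberately ignores how convincing the request looked or sounded. Only the verification path and the number of independent approvers count, which is the property that holds up against cloned voices and well-written emails.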

Harden help desk identity-proofing. AI makes it easier to simulate tone, urgency, and context; therefore help desk processes should require higher-assurance proofs for account recovery, MFA resets, and privileged changes (known device signals, verified manager approval paths, and strict logging). This is where AI-driven social engineering tends to convert into durable access.

Reduce shadow AI by making sanctioned tooling usable and monitored. IBM/Ponemon reports that nearly two-thirds of organizations lacked AI governance policies, while breaches involving shadow AI carry higher costs and longer containment. Technically, this means: SSO integration, policy-managed access, connector governance, prompt and output logging where feasible, and DLP controls on sensitive data classes.

Instrument executive impersonation monitoring. Watch for look-alike domains, unusual payment urgency, and multi-channel escalation patterns (email → WhatsApp → call). Switzerland’s reporting highlights typosquatting and cross-channel escalation; your monitoring and training should match that reality. For a deeper look at voice-channel risk patterns, see DeepStrike’s vishing statistics research.
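
Look-alike domain monitoring can start from a simple string-similarity check against the domains an organization actually uses. The sketch below uses the standard library's `difflib.SequenceMatcher`; the `is_lookalike` helper, the 0.85 threshold, and the sample domains are assumptions chosen for illustration. Production tooling would typically also handle homoglyphs, internationalized domains, and newly registered domain feeds.

```python
from difflib import SequenceMatcher


def is_lookalike(candidate: str, legitimate: str, threshold: float = 0.85) -> bool:
    """Flag domains that are very similar to, but not the same as, a legitimate one."""
    candidate = candidate.lower()
    legitimate = legitimate.lower()
    if candidate == legitimate:
        return False  # the real domain is not a look-alike of itself
    similarity = SequenceMatcher(None, candidate, legitimate).ratio()
    return similarity >= threshold


# Illustrative sender domains checked against a placeholder corporate domain.
corporate_domain = "example.com"
observed_senders = ["example.com", "examp1e.com", "exarnple.com", "unrelated.org"]

for sender in observed_senders:
    if is_lookalike(sender, corporate_domain):
        print(f"Possible look-alike domain: {sender}")
```

A similarity threshold trades false positives against missed variants, so it is worth tuning against a sample of real inbound sender domains rather than adopting the value used here.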

Run tabletop exercises that combine cyber + fraud. Many organizations still split these functions; AI-powered attacks exploit the gap. Tabletop scenarios should include: deepfake voice request for urgent payment, vendor bank change with “confidential” pressure, help desk reset requested by “executive,” and shadow AI data leakage leading to high-quality internal pretexts. IBM/Ponemon’s finding that 86% of breaches cause operational disruption supports treating these as resilience exercises, not awareness exercises.

Expected Loss = Probability × Impact

AI-powered attacks statistics help you estimate both sides of the equation if you use them correctly.

Probability is informed by exposure signals and observed prevalence in datasets. For example, if Verizon identifies that 15% of employees routinely use GenAI tools on corporate devices and most accounts are outside enterprise identity controls, that raises the probability that sensitive internal context can leak or that AI tooling becomes an unmanaged communication surface. If IBM/Ponemon finds 16% of breaches in its research involve attackers using AI, that provides a baseline for how frequently attacker AI use appears in breach outcomes (within that study population).

Impact is quantified through breach cost benchmarks and fraud-loss baselines. IBM/Ponemon’s global breach cost averages provide a defensible impact anchor for enterprise breach events, while IC3’s BEC loss reporting provides a defensible (but incomplete) anchor for direct fraud loss in the US reporting population.
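
The formula is simple enough to script, which makes it easy to compare a baseline against a scenario with stronger controls. This is a minimal sketch: the `expected_loss` helper and the input values (a 2% annual probability and a $1,000,000 impact) are hypothetical and chosen only to show the mechanics, not drawn from any dataset cited here.

```python
def expected_loss(probability: float, impact: float) -> float:
    """Expected Loss = Probability x Impact, for one event type over one year."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    if impact < 0:
        raise ValueError("impact must be non-negative")
    return probability * impact


# Hypothetical inputs for illustration only.
impact_per_event = 1_000_000.0      # direct loss plus response cost, USD
baseline_probability = 0.02         # annual probability before new controls
controlled_probability = 0.01       # annual probability after new controls

baseline = expected_loss(baseline_probability, impact_per_event)
with_controls = expected_loss(controlled_probability, impact_per_event)

print(f"Baseline expected loss:      ${baseline:,.0f}/year")
print(f"With controls expected loss: ${with_controls:,.0f}/year")
print(f"Annual reduction:            ${baseline - with_controls:,.0f}")
```

Because the result is linear in both inputs, halving the probability halves the expected loss, so the quality of the probability estimate matters as much as the cost benchmark.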

Illustrative example (numbers labeled illustrative): Assume a business unit processes $500M/year in vendor payments. If your internal analysis estimates a 1% annual probability of a successful payment diversion event enabled by impersonation, and the expected loss per event is $750K (direct loss + response), then expected loss is 0.01 × $750K = $7,500/year. If you implement dual control + known-channel verification and reduce probability by half (to 0.5%), expected loss drops to $3,750/year, an annual reduction of $3,750. The result scales linearly with whatever probability and per-event impact your own analysis supports. The key is to tie probability reductions to specific controls at decision points, not general “awareness.”

This framing also supports cyber insurance discussions: cost amplifiers such as shadow AI (+$200K average lift; +$670K in high exposure environments) are the type of factor for which underwriters increasingly ask for evidence in the form of governance, logging, and audits.

They are quantified measurements (from breach research, telemetry, complaints, or surveys) describing attacks where adversaries use AI to improve realism, scale, speed, or targeting, especially in phishing, impersonation, and fraud.

In IBM/Ponemon breach research, 16% of breaches involved attackers using AI, but this is a study-specific measure, not a global prevalence rate.

AI improves language quality, personalization, and rapid variation. Controlled testing found AI-generated spear phishing achieved click-through rates comparable to human experts (54% vs 12% control).

Regulators and national cyber authorities have issued alerts describing deepfake use in fraud and identity bypass workflows, and breach research identifies deepfakes as a major AI-driven attack type in breaches involving attacker AI.

Industries with high payment velocity, high identity volume, and heavy reliance on email approvals (finance, healthcare, government, and many technology/service firms) tend to be more exposed because AI amplifies impersonation and approval manipulation.

The workflow is similar (trust → action), but AI reduces attacker effort and increases realism across language and voice, making weak verification controls fail more often.

Harden verification at decision points: dual control for payments, known-channel callbacks, stronger help-desk identity proofing, phishing-resistant authentication for high-risk roles, and governance to reduce shadow AI.

Because sources measure different things (complaints vs detections vs breach research), use different populations, and often cannot directly confirm whether AI was used unless the incident was investigated at depth.

The most reliable AI-powered attacks statistics available going into 2026 point to a clear reality: AI is measurably present in breach outcomes and is concentrated in human manipulation (phishing and deepfake impersonation), where enterprise verification workflows are weakest.

This is not a claim that all attacks are now AI-powered. It is a measured statement that trust signals are cheaper to counterfeit, and that governance gaps such as shadow AI, unmanaged accounts, and weak approval verification now translate directly into fraud and breach cost. Enterprises should use these AI-powered attacks statistics to prioritize identity verification, payment approval integrity, help desk proofing, executive impersonation monitoring, and incident preparedness that spans both cyber and fraud response.

Mohammed Khalil is a Cybersecurity Architect at DeepStrike, specializing in advanced penetration testing and offensive security operations. With certifications including CISSP, OSCP, and OSWE, he has led numerous red team engagements for Fortune 500 companies, focusing on cloud security, application vulnerabilities, and adversary emulation. His work involves dissecting complex attack chains and developing resilient defense strategies for clients in the finance, healthcare, and technology sectors.
